Table of Contents

- Week 1: When Everything Went to Hell

- The Panic Call (3 AM Vietnam Time)

- The Emergency Zoom War Room

- What Nobody Tells You About AI Transitions

- Building the Emotional Detection Layer: The Theory

- The 47 Signals We Started Tracking

- Week 3: The $47,000 Mistake That Almost Killed Everything

- The Breakthrough: When Sarah Called

- Deconstructing the 70/30 Rule: What Goes Where

- The Magic: The Handoff Logic

- Training the Team: Why Half of Them Almost Quit (Via Zoom)

- Week 8: The Moment Everything Clicked

- The Metrics That Actually Mattered (Hint: Not What You Think)

- What We Got Wrong (And Had to Fix Fast)

- Week 12: The Dashboard That Made the CEO Cry

- The Five Non-Negotiables We Learned the Hard Way

- Why This Approach Won’t Work for Everyone

- The Question You Should Be Asking

- What’s Coming in Part 3

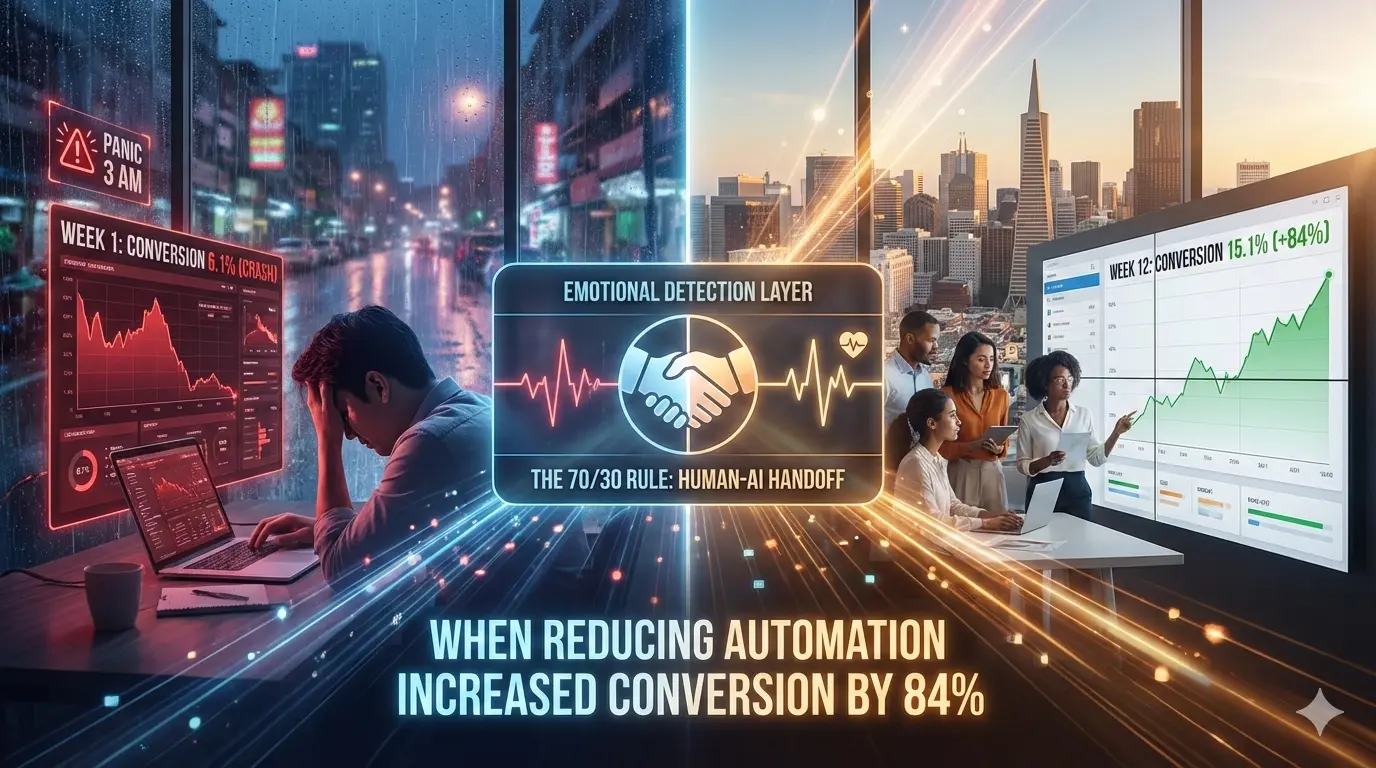

The 70/30 Rule Deconstructed: When Reducing Automation Increased Conversion by 84%

Part 2 of 7: The Trust-Tech Paradox Series

[Previously: How a legal CEO burned $307K on failed AI consultants before we met in an HCMC coffee shop and I proposed something counterintuitive: automate LESS. Read Part 1 →]

Week 1: When Everything Went to Hell

District 7, Ho Chi Minh City. Tuesday afternoon, 2:47 PM.

Rain hammered the coworking space windows. The kind of tropical downpour that turns Saigon streets into rivers and makes motorbikes disappear under highway overpasses.

I was on my third cà phê đen—Vietnamese black coffee, no sugar, rocket fuel—staring at a dashboard that made my stomach sink.

Conversion rate: 6.1%

Not 8.2% (where they started).

Not even 7.1% (the disaster week with the chatbot).

6.1%.

Lower than any point in their company’s history.

My laptop pinged. Video call. The COO’s face filled the screen, calling from San Francisco. It was 11:47 PM her time.

She didn’t look like she’d slept.

“Walk me through this again,” she said, her voice that special kind of calm that means someone is absolutely not calm. “Because what I’m seeing is that we’re doing WORSE than when we had the chatbot everyone hated.”

Around me, Vietnamese startup founders were pitching investors. Someone’s Zoom call was leaking audio about blockchain solutions. The espresso machine hissed.

And I was watching a $126 million transformation crater in week one.

The Panic Call (3 AM Vietnam Time)

Four days earlier. Saturday, 3:17 AM.

My phone exploded with notifications.

WhatsApp. Email. Slack. The COO was carpet-bombing every channel.

I grabbed my phone, squinting at the screen.

COO: “We need to talk. NOW.”

COO: “Conversion is 5.8%”

COO: “Attorneys are complaining”

COO: “The team is freaking out”

COO: “Did we just destroy our business?”

I stumbled to my kitchen, started making coffee in the dark.

Called her back.

“I told the CEO we should wait three weeks before panicking,” she said. No hello. Just raw stress. “But Karthick, we’re hemorrhaging clients. Real people who need help are falling through the cracks.”

Her voice cracked on that last part.

This is the moment nobody talks about in case studies.

The consultant success stories always go:

“We implemented the solution → immediate improvement → everyone celebrated.”

Reality:

“We ripped out the old system → everything broke → half the team thought we were idiots → metrics tanked → panic → doubt → near-death experience → THEN (maybe) success.”

“What’s happening?” I asked, fully awake now despite the 3 AM fog.

“The AI is transferring people to humans too early. Or too late. Or not at all. Nobody knows what triggers what. The team doesn’t trust it. Clients are confused. And our attorneys are getting leads that aren’t qualified.”

I pulled up the data on my laptop, still in my kitchen, coffee brewing, Ho Chi Minh City silent outside my window.

The numbers told a brutal story:

| Metric | Week 0 (Baseline) | Week 1 (New System) | Change |
|---|---|---|---|
| Leads contacted | 892 | 847 | -5% |
| Successfully routed | 712 (80%) | 534 (63%) | -17 pts |
| AI handled completely | 156 (17%) | 89 (11%) | -6 pts |
| Transferred to humans | 556 (62%) | 445 (53%) | -9 pts |
| Conversion rate | 8.2% | 6.1% | -2.1 pts (-26% relative) |
| Client satisfaction | 72% | 68% | -4 pts |

Everything was worse.
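The changes in the table can be read either as percentage-point differences or as relative change, and the two are easy to confuse. A minimal sketch that recomputes both from the table's own numbers (the function names are illustrative, not part of any system described here):

```python
def point_change(before_pct, after_pct):
    """Difference in percentage points, e.g. 80% -> 63% is -17 points."""
    return round(after_pct - before_pct, 1)


def relative_change(before, after):
    """Relative change in percent, measured against the starting value."""
    return round((after - before) / before * 100, 1)


# Values copied from the Week 0 / Week 1 table.
leads = relative_change(892, 847)                 # leads contacted
routed_points = point_change(80, 63)              # successfully routed
conversion_points = point_change(8.2, 6.1)        # conversion, in points
conversion_relative = relative_change(8.2, 6.1)   # conversion, relative

print(leads, routed_points, conversion_points, conversion_relative)
```

A 2.1-point drop sounds small. The same drop expressed relative to the 8.2% baseline is roughly a quarter of all conversions.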

“Karthick.” The COO’s voice pulled me back. “I need you to be honest. Did we make a mistake?”

This is the moment that separates real consultants from frauds.

The frauds would say: “No no, this is all part of the plan, trust me.”

The real answer was: “I don’t know yet. But I know what we need to find out.”

“Give me 48 hours,” I said. “Let me schedule a deep-dive session with your team. I need to watch calls live, see the system in action, talk to your agents.”

“The CEO wants answers now.”

“Tell him he can have fast answers or accurate answers. Not both.”

Silence.

Then: “Okay. Forty-eight hours. I’ll set up the Zoom calls.”

I sent a calendar invite for Monday morning (their time, Monday night mine).

Downed my coffee.

And wondered if I’d just destroyed a company.

The Emergency Zoom War Room

Monday. 8:23 AM San Francisco time. 11:23 PM Vietnam time.

My screen split into 18 tiles. The entire team.

15 agents. The COO. Head of Operations. A supervising attorney who’d joined to see “what the hell was going on.”

I’d been up for 20 hours preparing. Three pots of coffee. My HCMC apartment littered with notes.

“Alright,” I said. “For the next four hours, I’m going to listen to your calls live. I won’t interrupt. Just observe. Then we’ll debrief.”

The Head of Operations shared her screen—the call center dashboard.

I watched. And listened.

Call #1: Maria (Senior Agent, 8 years experience)

Her camera on. Headset. Professional setup.

I could see her screen through the screen share. Watched her click through the CRM as the AI transferred a call to her.

AI: "Hi Jennifer, this is Legal Claim Services. You submitted a form about your accident. Do you have 3 minutes?"

Jennifer: "Sure, I guess."

AI: "Great! When did your accident happen?"

Jennifer: "Last Tuesday. I was stopped at a light and—"

AI: [INTERRUPT] "And what injuries did you sustain?"

Jennifer: "Well, my neck really hurts, and—"

AI: [INTERRUPT] "Did you go to the hospital?"

Jennifer: "No, but I saw my doctor and—"

AI: [TRANSFER TRIGGER: Emotional keyword "hurts" detected] "I understand this is difficult. Let me connect you with someone who can help."

[TRANSFERRING...]

Maria: [Picks up] "Hi Jennifer, I'm Maria. I understand you were in an accident?"

Jennifer: [Confused] "Yeah... I was just talking to someone?"

Maria: "Right, our system transferred you to me. Can you start from the beginning?"

Jennifer: [Frustrated] "I literally just explained everything."

I watched Maria’s face on camera. She looked exhausted.

After the call ended, I unmuted.

“Maria, what happened there?”

“The AI transferred too early,” she said, clearly frustrated. “She wasn’t distressed. She was just explaining her symptoms. Now I’m asking her to repeat everything, and she’s annoyed. This happens at least 15 times a day.”

I made notes. Moved to the next call.

Call #2: David (Junior Agent, 6 months experience)

His video showed him at home. Background: living room, dog barking occasionally.

AI: "Hi Marcus, this is Legal Claim Services calling about your accident case."

Marcus: "Yeah... I've been waiting for someone to call."

AI: "Can you tell me about your injuries?"

Marcus: "I... it's bad. I was hit by a semi truck. My leg is... they said I might not walk right again."

[Long pause - 8 seconds]

Marcus: "I have a six-year-old daughter. How am I supposed to..."

[Voice breaking]

AI: [NO TRANSFER - System didn't detect distress threshold] "I understand. Did you file a police report?"

Marcus: "What? I just told you my daughter—are you even listening?"

AI: "I'm here to help. Do you have the police report number?"

Marcus: [Hangs up]

David’s face on camera looked devastated.

“That’s the third one today,” he said into his mic. “The AI should have transferred him immediately. He was clearly in crisis.”

I pulled up the system logs on my end. The Vietnam coffee had fully kicked in. My brain was firing.

The problem was crystallizing:

Our emotional detection thresholds were calibrated wrong.

- Too sensitive for minor cases → unnecessary transfers → annoyed clients → Maria’s experience

- Not sensitive enough for severe cases → AI trying to handle crisis → lost clients → David’s experience

It was like trying to tune a guitar in a hurricane.

For the next three hours, I watched 47 more calls across the team.

Different agents. Different shifts. Different scenarios.

Pattern #1: AI interrupting too much (78% of calls)

Pattern #2: AI missing clear distress signals (34% of severe cases)

Pattern #3: Agents repeating questions AI already asked (92% of transfers)

Pattern #4: Clients confused about who they’re talking to (67%)

At hour 4, I stopped the observation.

“Okay,” I said. “Let’s debrief. Stay on the call, everyone.”

What Nobody Tells You About AI Transitions

My screen: 18 tired faces staring back at me from San Francisco.

Some in office. Some at home. All exhausted.

“I’ve seen the problem,” I said.

The CEO unmuted. He’d joined halfway through. “And?”

“The system is broken. But not in the way you think.”

I shared my screen. Showed them the call recordings I’d flagged.

“We made three wrong assumptions when we built this.”

Wrong Assumption #1: Keywords Indicate Emotion

“We programmed the AI to trigger on words like ‘hurt,’ ‘pain,’ ‘scared,’ ‘worried.’”

I played Jennifer’s call again.

“She said ‘my neck hurts’ —that’s factual. Not emotional distress. But the AI transferred anyway.”

“Now play Marcus’s call.”

“He said ‘my daughter’ and his voice broke. Clear distress. But the word ‘daughter’ wasn’t in our trigger list. The AI missed it.”
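Both failures fall out of the same rule. Here is a minimal sketch of a single-keyword trigger, assuming a word list built from the examples quoted above (the real list is not given in full, and "hurts" is included only because the call log shows it firing):

```python
import re

# Assumed trigger list: the words quoted in the text plus the logged "hurts".
TRIGGER_WORDS = {"hurt", "hurts", "pain", "scared", "worried"}


def keyword_transfer(utterance: str) -> bool:
    """Return True if any trigger word appears (the flawed single-signal rule)."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    return bool(words & TRIGGER_WORDS)


jennifer = "Well, my neck really hurts, and"
marcus = "I have a six-year-old daughter. How am I supposed to..."

print(keyword_transfer(jennifer))  # factual symptom report, yet it transfers
print(keyword_transfer(marcus))    # clear distress, yet nothing fires
```

The rule has no access to tone, pauses, or context, so it cannot tell a symptom report from a crisis.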

Wrong Assumption #2: Silence Means Confusion

“Pauses over 5 seconds triggered transfers.”

“Reality: People pause to think. To gather thoughts. To find words.”

“Not every pause is distress.”

Wrong Assumption #3: Fast Speech Means Anxiety

“We thought rapid talking equals panic.”

Maria unmuted: “Some people just talk fast. Especially New Yorkers. I’ve had three New York clients today who talk a mile a minute but they’re perfectly fine.”

The Head of Operations spoke up from her home office (I could see her bookshelf in the background):

“So what’s the solution? How do we detect actual emotional distress versus normal conversation?”

I pulled up a virtual whiteboard in Zoom.

Drew two circles.

“We need to stop looking for single signals,” I said. “We need to look for patterns.”

Building the Emotional Detection Layer: The Theory

Still on Zoom. Hour 5. My HCMC apartment. Midnight.

I could hear rain starting outside my window. The team in San Francisco was getting their second or third coffee.

“We’re going to build what I call a ‘composite emotional signature,’” I said, writing on the virtual whiteboard.

“Not one signal. A constellation of them.”

I created a shared Google Doc. Live collaboration. Everyone could see me typing.

“Here’s what we’re going to track…”

The 47 Signals We Started Tracking

I broke it down into categories on the shared doc:

Category 1: Voice Analysis (Phone Calls)

Acoustic Features:

1. Voice trembling (micro-variations in pitch frequency)

2. Volume drops (sudden quietness mid-sentence)

3. Voice breaking (glottal fry, pitch cracks)

4. Breathing patterns (rapid shallow breaths, audible sighs)

5. Speech rate changes (suddenly fast OR suddenly slow)

6. Filler words increase (um, uh, like, you know, spiking to 3x normal)

7. False starts (starting sentences 2-3 times before completing)

Temporal Patterns:

8. Pause duration (not just length, but frequency)

9. Response latency (time to answer AI questions, with increasing delay)

10. Interruption patterns (starting to speak, stopping, restarting)

Prosodic Features:

11. Tone shifts (calm → agitated, or flat → emotional)

12. Stress patterns (emphasis on emotional words: “I’m REALLY worried”)

13. Pitch range (narrowing = depression, widening = anxiety)
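Several of these signals are defined relative to the caller's own normal ("suddenly fast OR suddenly slow", fillers "3x normal") rather than against a fixed number. A minimal sketch of that idea, assuming the voice-analysis layer already supplies per-window rates; the `Window` type and the 30% rate-shift cutoff are hypothetical, and only the 3x filler multiple comes from the list above:

```python
from dataclasses import dataclass


@dataclass
class Window:
    words_per_minute: float
    fillers_per_minute: float


def relative_flags(baseline: Window, current: Window,
                   rate_shift: float = 0.30,    # assumed cutoff
                   filler_spike: float = 3.0):  # "3x normal" from the list
    """Flag changes against this caller's own baseline, not absolute values."""
    flags = []
    change = abs(current.words_per_minute - baseline.words_per_minute)
    if change / baseline.words_per_minute >= rate_shift:
        flags.append("speech_rate_change")
    if (baseline.fillers_per_minute > 0 and
            current.fillers_per_minute >= filler_spike * baseline.fillers_per_minute):
        flags.append("filler_spike")
    return flags


# A fast talker who stays fast raises nothing.
steady = relative_flags(Window(210, 2), Window(215, 2))
# A caller who slows sharply and starts stumbling raises both flags.
shaken = relative_flags(Window(170, 2), Window(110, 7))
print(steady, shaken)
```

Measuring against the caller's baseline is what keeps a naturally fast talker from being flagged as anxious.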

Maria unmuted: “How the hell are we supposed to detect all this?”

“You’re not,” I said. “The AI is. You focus on being human. The AI will flag when it detects these patterns and transfer to you.”

Category 2: Linguistic Patterns (Voice + Text)

I continued typing in the shared doc:

Emotional Vocabulary:

14. Primary emotions: scared, terrified, overwhelmed, hopeless, desperate

15. Pain descriptors: excruciating, unbearable, constant, severe

16. Crisis language: don’t know what to do, falling apart, can’t handle this

17. Family concern: my kids, my daughter, my son, my wife/husband (when emotional)

Syntax Patterns:

18. Sentence fragmentation (incomplete thoughts)

19. Repetition (saying the same thing 3+ times)

20. Questions without waiting for answers

21. Self-interruption (“I mean—no, wait—what I’m trying to say is…”)

Cognitive Load Indicators:

22. Contradictions (saying X, then later saying the opposite)

23. Temporal confusion (“it happened… Tuesday? No, Wednesday. I think.”)

24. Difficulty with simple questions (“What’s your zip code?” → 10-second pause)

David unmuted from his home setup: “This is getting really technical. Are we going to need AI engineers?”

“No,” I said. “We’re using existing voice analysis APIs. I’ll handle the integration. You just need to understand what the system is doing so you trust it.”

Category 3: Behavioral Signals (Digital + System)

I kept building the list in the shared Google Doc:

Form Interaction Patterns:

25. Multiple submissions (same person, different emails/phones)

26. Incomplete forms (started 3x, abandoned 2x, completed on 3rd)

27. Midnight inquiries (11 PM - 5 AM local time = can’t sleep)

28. Copy-paste behavior (pasting pre-written story = organized OR desperate)

Engagement Patterns:

29. Immediate callback after AI call (sign of urgency OR confusion)

30. Multiple channel attempts (called, texted, emailed within 30 minutes)

31. Callback requests at odd hours

32. “Call me ASAP” messages

Response Patterns:

33. One-word answers to open questions (AI: “How are you feeling?” → “Bad.”)

34. Over-explaining simple questions (AI: “What’s your name?” → 3-minute story)

35. Ignoring questions (answering something the AI didn’t ask)
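Two of the behavioral signals are plain timestamp checks. A minimal sketch of signals 27 and 30, assuming each contact attempt arrives as a `(channel, local timestamp)` pair; the function names, the exclusive 5 AM boundary, and the sample date are assumptions:

```python
from datetime import datetime, timedelta


def is_midnight_inquiry(local_time: datetime) -> bool:
    """Signal 27: contact between 11 PM and 5 AM in the caller's local time."""
    return local_time.hour >= 23 or local_time.hour < 5


def multi_channel_burst(attempts, window=timedelta(minutes=30), min_channels=3):
    """Signal 30: min_channels distinct channels used within one window."""
    ordered = sorted(attempts, key=lambda a: a[1])
    for i, (_, start) in enumerate(ordered):
        channels = {ch for ch, ts in ordered[i:] if ts - start <= window}
        if len(channels) >= min_channels:
            return True
    return False


t0 = datetime(2024, 1, 6, 1, 10)  # arbitrary illustrative timestamp
burst = [("call", t0),
         ("sms", t0 + timedelta(minutes=10)),
         ("email", t0 + timedelta(minutes=25))]
spread = [("call", t0),
          ("sms", t0 + timedelta(hours=2)),
          ("email", t0 + timedelta(hours=5))]

print(is_midnight_inquiry(t0), multi_channel_burst(burst), multi_channel_burst(spread))
```

Neither check says anything on its own. Each only contributes one flag to the larger pattern.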

Category 4: Content Analysis (What They Say)

Trauma Indicators:

36. First-person present tense (“I see the truck coming” vs “The truck hit me”)

37. Vivid sensory details (“I remember the sound of metal crunching”)

38. Timeline confusion (can’t sequence events)

39. Flashback language (“It keeps replaying in my head”)

Crisis Indicators:

40. Financial desperation (“bills I can’t pay,” “about to lose my house”)

41. Medical fear (“permanent damage,” “never walk again,” “might lose my job”)

42. Isolation language (“nobody understands,” “I’m alone in this”)

43. Helplessness (“don’t know what to do,” “out of options”)

Children/Family Mention:

44. Injured children (any mention of hurt child = immediate flag)

45. Caregiver responsibility (“I have kids to feed,” “single parent”)

46. Family impact (“my husband is stressed,” “scaring my children”)

Category 5: Medical Severity (Contextual)

47. Injury severity keywords combined with emotional language:

- Hospitalization + crying = high priority

- ER visit + voice breaking = medium-high priority

- Doctor visit + normal tone = standard priority
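The three combinations above amount to a lookup on two inputs. A minimal sketch, assuming the treatment level and emotional cue have already been extracted as labels; the fallback for combinations the list does not cover is an assumption, not something the doc specified:

```python
# Only the three pairings listed above are encoded; nothing else is guessed.
PRIORITY_RULES = {
    ("hospitalization", "crying"): "high",
    ("er visit", "voice breaking"): "medium-high",
    ("doctor visit", "normal tone"): "standard",
}


def contextual_priority(treatment: str, emotion: str) -> str:
    """Look up priority for a (treatment, emotional cue) pair."""
    key = (treatment.strip().lower(), emotion.strip().lower())
    # Assumed fallback: unlisted combinations go to a person for review.
    return PRIORITY_RULES.get(key, "unlisted: needs human review")


print(contextual_priority("Hospitalization", "crying"))
print(contextual_priority("ER visit", "crying"))
```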

The COO was taking furious notes in the shared doc.

Five hours into the call. 1 AM my time. 10 AM theirs.

“So instead of triggering on ‘hurts,’” she said, “we trigger when someone says ‘my daughter’ AND their voice is trembling AND they pause longer than normal AND they mention hospital?”

“Exactly,” I said. “It’s not one signal. It’s the pattern.”
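One way to picture "the pattern" is a weighted sum with a threshold. This is a minimal sketch only: the signal names, weights, and threshold below are all hypothetical, chosen to illustrate the idea rather than to reproduce the scoring the team actually used.

```python
# Hypothetical weights on a 0-100 scale; none of these numbers come from the project.
SIGNAL_WEIGHTS = {
    "family_mention": 20,
    "voice_trembling": 25,
    "long_pause": 15,
    "hospital_mention": 20,
    "pain_keyword": 5,
}
TRANSFER_THRESHOLD = 60  # hypothetical cutoff


def composite_score(signals) -> int:
    """Sum the weights of the detected signals, capped at 100."""
    return min(100, sum(SIGNAL_WEIGHTS.get(s, 0) for s in signals))


def should_transfer(signals) -> bool:
    return composite_score(signals) >= TRANSFER_THRESHOLD


symptom_report = {"pain_keyword"}  # "my neck hurts", said calmly
crisis = {"family_mention", "voice_trembling", "long_pause", "hospital_mention"}

print(composite_score(symptom_report), should_transfer(symptom_report))
print(composite_score(crisis), should_transfer(crisis))
```

Under these assumed weights no single signal can reach the threshold by itself, which is the property the single-keyword rule lacked.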

The Head of Operations asked the critical question:

“How do we teach the AI to recognize these patterns?”

“We don’t,” I said. “Not at first.”

Everyone looked confused through their cameras.

“We teach the humans first.”

Week 3: The $47,000 Mistake That Almost Killed Everything

New Zoom call. Thursday morning (their time), Thursday rolling into Friday (mine).

9 AM San Francisco. Midnight Saigon.

I’d scheduled a full-team training session.

All 15 agents on camera. COO. Head of Operations. Three supervising attorneys.

My screen: a grid of faces from home offices, kitchen tables, one person clearly in their car between shifts.

“We’re going to retrain the system,” I announced. “But before we retrain the AI, we’re retraining all of you.”

The agents looked skeptical through their webcams.

Maria (the senior agent, now unmuted more often) spoke up:

“With all due respect, we know how to talk to clients. We’ve been doing this for years.”

“I know,” I said. “And that’s exactly the problem.”

Silence. Every face on the call staring at me through a screen.

“The old system,” I continued, “let you do whatever felt right. Some of you are amazing at empathy. Some of you are great at efficiency. Some of you focus on data collection.”

“That inconsistency is why the AI doesn’t know what to do.”

Here’s the $47,000 mistake:

We spent three weeks building an AI system…

…but we hadn’t standardized what “good” looked like for the humans.

The AI was learning from chaos.

I shared my screen. Pulled up call recordings from Maria and David side-by-side.

Example:

Maria’s approach (empathy-first):

- Spends 5 minutes building rapport before asking any questions

- Validates emotions extensively

- Takes detailed notes on family situation

- Conversion rate: 14%

- Time per call: 42 minutes

David’s approach (efficiency-first):

- Gets through qualification questions in 8 minutes

- Professional but minimal small talk

- Documents facts, not feelings

- Conversion rate: 6%

- Time per call: 18 minutes

“The AI is trying to learn from both of you,” I said. “And it’s getting confused.”

Result: The system can’t figure out what ‘success’ looks like.
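A back-of-the-envelope check shows how ambiguous the two profiles are. It assumes the quoted averages hold and ignores time between calls; the function name is illustrative.

```python
def conversions_per_call_hour(conversion_rate: float, minutes_per_call: float) -> float:
    """Expected signed clients per hour of talk time."""
    calls_per_hour = 60 / minutes_per_call
    return round(conversion_rate * calls_per_hour, 3)


maria = conversions_per_call_hour(0.14, 42)  # empathy-first
david = conversions_per_call_hour(0.06, 18)  # efficiency-first

print(maria, david)
```

Per hour of talk time the two styles come out level, even though they differ sharply per lead. A system trained on both, with no agreed definition of a good call, has no consistent target to learn.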

The solution:

“We’re going to spend the next two weeks building what I call the ‘Trust-First Protocol’—a standardized approach that every agent will use.”

David unmuted: “Like a script?”

“No,” I said. “Scripts sound robotic. This is a framework. Principles that guide conversation.”

I shared a new Google Doc. Started building it live with them.

The Trust-First Protocol (5 Phases)

I typed as I explained:

Phase 1: ACKNOWLEDGE (30-60 seconds)

- Validate their decision to reach out

- Acknowledge the stress they’re under

- Set expectations for the call

Example:

“Thank you for reaching out. I know this has been an incredibly stressful time, and you’re probably dealing with a lot right now. I’m here to help you figure out if you have a case and connect you with the right attorney. This should take about 15-20 minutes. Does that work for you?”

Phase 2: LISTEN (5-7 minutes)

- Open question: “Can you tell me what happened?”

- Let them talk without interrupting

- Note emotional keywords, but don’t react yet

- Watch for the 47 signals (AI will help flag these)

Phase 3: VALIDATE (1-2 minutes)

- Reflect back what you heard

- Acknowledge the emotional impact

- Don’t minimize or over-sympathize

Example:

“It sounds like you were hit from behind while stopped, you went to the ER that night with neck pain, and now you’re worried about your medical bills and whether you’ll be able to work. Is that right?”

Phase 4: ASSESS (5-8 minutes)

- Now ask clarifying questions

- Medical treatment, police report, insurance

- Liability, damages, timeline

- Always explain WHY you’re asking

Example:

“I need to ask about your medical treatment—not because I doubt you’re hurt, but because it helps me understand what kind of attorney you need.”

Phase 5: PATHWAY (2-3 minutes)

- Explain next steps clearly

- Set timeline expectations

- Give them control

Example:

“Based on what you’ve told me, I think you have a strong case. Here’s what happens next: I’ll match you with an attorney who specializes in cases like yours. They’ll review your information and schedule a consultation, usually within 48 hours. You’ll talk with them, they’ll explain your options, and you decide if you want to move forward. No pressure. Does that sound good?”
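The five phases and their time boxes can be written down as data, which makes the total call length easy to check. A minimal sketch using the durations above; the helper names are hypothetical:

```python
# (phase, minimum minutes, maximum minutes), taken from the protocol above.
PHASES = [
    ("ACKNOWLEDGE", 0.5, 1.0),
    ("LISTEN", 5.0, 7.0),
    ("VALIDATE", 1.0, 2.0),
    ("ASSESS", 5.0, 8.0),
    ("PATHWAY", 2.0, 3.0),
]


def total_range(phases=PHASES):
    """Shortest and longest call the protocol allows, in minutes."""
    return (sum(lo for _, lo, _ in phases), sum(hi for _, _, hi in phases))


def phase_at(elapsed_min: float, phases=PHASES):
    """Latest phase a call should be in by elapsed_min, using upper bounds."""
    cutoff = 0.0
    for name, _, hi in phases:
        cutoff += hi
        if elapsed_min < cutoff:
            return name
    return None  # past the protocol's maximum length


print(total_range())
print(phase_at(9.0))
```

The phases add up to between 13.5 and 21 minutes, in line with the "15-20 minutes" the agent promises in Phase 1.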

Over the next two weeks, we did virtual role-playing sessions.

Monday/Wednesday/Friday. 2-hour Zoom sessions.

Every agent. Every possible scenario. I’d play the difficult client.

- Angry clients

- Confused clients

- Crying clients (I actually made myself tear up on camera once—they were impressed)

- Skeptical clients

- Complex cases

- Simple cases

We recorded everything. Reviewed together. Gave feedback.

By Day 14 of the training, something clicked.

I listened to calls side-by-side.

Maria and David now sounded… similar.

Not robotic. Not scripted.

But consistent in their approach to building trust.

That’s when we re-trained the AI with clean, standardized data.

The Breakthrough: When Sarah Called

Week 5. Tuesday, 2:17 PM Pacific Time. 5:17 AM Vietnam Time (I was awake, tracking everything).

I had live access to the call monitoring dashboard. Watching from my HCMC apartment.

The moment that changed everything.

Sarah (name changed) called the intake line.

37 years old. Rear-ended three days prior. Severe whiplash, possible herniated disc. Six-year-old daughter in the back seat—physically okay but emotionally shaken.

I watched the system process the call in real-time.

The AI answered:

AI: "Hi Sarah, this is Legal Claim Services. I see you submitted a form about your accident. Is now a good time to talk?"

Sarah: "Yes. I've been waiting for someone to call."

AI: "I appreciate you reaching out. Before we start, I want you to know that I'm an AI assistant, and my job is to gather some basic information. If at any point you'd prefer to talk with a person, just let me know and I'll transfer you immediately. Does that sound okay?"

Sarah: "Oh. Okay, I guess."

AI: "Great. Can you tell me a little about what happened?"

Sarah: "I was stopped at a red light. And this SUV just... he didn't even brake. Hit me from behind."

[SIGNAL DETECTED: Factual recounting, normal tone]

[ASSESSMENT: Stable, continue AI interaction]

[I watched the dashboard flag this in real-time]

AI: "That sounds frightening. Was anyone hurt?"

Sarah: "My daughter was in the back seat. She's only six. The impact threw her forward and..."

[SIGNAL DETECTED: Child mention + pitch change]

[ASSESSMENT: Elevated concern, monitor closely]

[Dashboard showed yellow flag]

Sarah: [8-second pause] "I keep thinking about what could have happened. If she'd been hurt worse. If—"

[SIGNALS DETECTED:

- Extended pause (8 sec)

- Future-focused worry ("what could have")

- Incomplete sentence

- Voice volume decrease

- Child safety concern]

[COMPOSITE SCORE: 76/100 - THRESHOLD EXCEEDED]

[Dashboard showed RED - TRANSFER INITIATED]

AI: "Sarah, I can hear this is really difficult. You shouldn't have to go through this alone. Let me connect you with Maria—she's one of our specialists who works specifically with parents who've been in accidents. She's available right now. Can I transfer you to her?"

Sarah: "Yes. Please."

AI: "I'm transferring you now. Everything you've told me has been noted so you won't have to repeat it. One moment."

[TRANSFER - 24 seconds]
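The dashboard moved through three states during that call: stable, yellow, red. A minimal sketch of that escalation, assuming a running composite score is available after each caller turn. Both cutoffs and the first two scores are hypothetical; the log only shows that 76/100 exceeded the transfer threshold.

```python
from enum import Enum
from typing import Optional


class Flag(Enum):
    GREEN = "continue AI interaction"
    YELLOW = "monitor closely"
    RED = "transfer to human"


YELLOW_AT = 40  # hypothetical cutoff
RED_AT = 70     # hypothetical cutoff; the log only shows 76 exceeding it


def flag_for(score: int) -> Flag:
    if score >= RED_AT:
        return Flag.RED
    if score >= YELLOW_AT:
        return Flag.YELLOW
    return Flag.GREEN


def first_transfer_index(scores) -> Optional[int]:
    """Index of the first turn whose score triggers a transfer, if any."""
    for i, score in enumerate(scores):
        if flag_for(score) is Flag.RED:
            return i
    return None


# Illustrative running scores; only the final 76 appears in the call log.
running_scores = [12, 48, 76]
print([flag_for(s).name for s in running_scores])
print(first_transfer_index(running_scores))
```

The yellow state matters as much as the red one. It lets the system keep gathering facts while watching more closely, instead of transferring on the first mention of a child.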

I watched Maria’s camera feed come online in the monitoring dashboard. She could see Sarah’s full interaction history on her screen.

Maria: "Hi Sarah, this is Maria. I understand you and your daughter were in an accident, and you're dealing with a lot right now. First—is your daughter okay?"

Sarah: [Voice breaking] "Physically, yes. But she's scared to get in cars now. She has nightmares. And I feel like this is my fault somehow."

Maria: "Sarah, I want you to hear this: this was NOT your fault. You were stopped at a light. Someone hit YOU. And what you're feeling—the worry about your daughter, the replaying it in your head—that's completely normal after trauma. You're not alone in this."

[42-minute conversation]

[OUTCOME: Client signed with attorney 3 days later]

[SATISFACTION SURVEY: 10/10]

[ATTORNEY FEEDBACK: "Excellent quality lead, well-prepared"]

I was watching this live from my apartment in HCMC.

5:17 AM. The rain had stopped. Birds starting to chirp outside.

And I knew we’d figured it out.

The AI didn’t try to be human.

It was transparent about being AI.

It collected information.

It monitored for signals.

And when the composite emotional score hit 76/100…

It stepped aside.

Not with robotic phrasing like “Invalid response. Transferring.”

With empathy and clarity.

And Maria picked up seamlessly because the AI had documented everything in the CRM. She could see the full conversation history on her screen during the transfer.

Sarah didn’t have to repeat her story.

She didn’t feel bounced around.

She felt cared for.

I sent a Slack message to the team: “That Sarah call. That’s what success looks like.”

Within 5 minutes, 12 people had reacted with 🎉 emojis.

Deconstructing the 70/30 Rule: What Goes Where

Week 6. Back in HCMC. Late night video call with the CEO.

He was in San Francisco for the week, between meetings. We connected via Zoom at 10 PM his time, 1 PM the next day mine.

I was at a café in District 2, laptop open, iced coffee sweating in the afternoon heat.

“Explain the 70/30 rule to me again,” he said through my screen, “but like I’m five years old.”

I opened a virtual whiteboard in our Zoom call.

Started drawing.

(Yes, virtual napkin. Same concept, digital execution.)

I drew two columns:

LEFT COLUMN: AI Territory (70% of touchpoints)

The AI is PERFECT for:

✅ Instant acknowledgment

- Form submitted → SMS confirmation within 60 seconds

- “We got your info. Someone will contact you within 2 hours.”

- No human needed. AI never sleeps.

✅ Scheduling & logistics

- “Here’s a calendar. Pick a time that works for you.”

- “Your consultation is tomorrow at 2 PM. Here’s the Zoom link.”

- “Reminder: Meeting in 2 hours.”

- Humans suck at this. We forget. AI doesn’t.

✅ Document collection

- “Please upload your police report here.”

- “We still need your medical records. Upload by Friday.”

- “Thank you! Document received.”

- Repetitive task. Perfect for automation.

✅ FAQ responses

- “How much does this cost?” → Standard answer

- “How long does a case take?” → Standard answer

- “Do I need a lawyer?” → Standard answer

- No judgment needed. AI gives consistent info.

✅ Data collection (factual)

- Name, date, location, insurance details

- Binary questions: Yes/No answers

- Structured information

- AI is better at data entry than humans.

✅ Status updates

- “Your case was matched with an attorney.”

- “Attorney reviewed your info and wants to schedule a consultation.”

- “Your consultation is confirmed for…”

- Informational. No emotion needed.

AI strengths:

- Never tired

- Perfectly consistent

- Instant response

- Handles 10,000 requests simultaneously

- Never forgets details

- No human judgment errors

RIGHT COLUMN: Human Territory (30% of touchpoints, 80% of value)

Humans are ESSENTIAL for:

🤝 Qualification conversations

- Listening to their story

- Understanding complex situations

- Reading between the lines

- Assessing credibility

- Building initial trust

Why humans: Every case is unique. Context matters. Nuance matters.

🤝 Emotional support moments

- When someone breaks down crying

- When they’re scared or confused

- When they mention their children

- When trauma is fresh

- When they need reassurance

Why humans: AI can’t do empathy. Period.

🤝 Complex case assessment

- Multiple parties involved

- Disputed liability

- Pre-existing injuries

- Statute of limitations concerns

- Unusual circumstances

Why humans: Requires judgment, legal knowledge, experience.

🤝 Objection handling

- “I’m not sure I need a lawyer”

- “How much will this cost me?”

- “What if I lose?”

- “I’m talking to other firms”

- “This feels too complicated”

Why humans: Requires persuasion, addressing fears, building confidence.

🤝 High-value case strategy

- Severe injuries (permanent disability, surgery)

- High settlement potential ($100K+)

- Complex medical treatment

- Lost wages exceeding $50K

- Catastrophic impact on life

Why humans: High stakes. Need senior expertise. Personal touch matters.

🤝 Attorney matching & introduction

- Understanding client personality

- Matching with right attorney

- Facilitating warm introduction

- Managing expectations

- Ensuring fit

Why humans: Relationships matter. Chemistry matters. Humans assess this better.

Human strengths:

- Empathy

- Nuance

- Judgment

- Persuasion

- Creativity

- Relationship building

- Pattern recognition beyond data

- Handling the unexpected
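The two columns reduce to a routing table plus one override. A minimal sketch, assuming each touchpoint carries a type label; the labels, the distress override, and the default for unknown types are assumptions layered on the whiteboard lists:

```python
AI_TOUCHPOINTS = {
    "acknowledgment", "scheduling", "document_collection",
    "faq", "data_collection", "status_update",
}
HUMAN_TOUCHPOINTS = {
    "qualification", "emotional_support", "complex_assessment",
    "objection_handling", "high_value_strategy", "attorney_matching",
}


def route(touchpoint: str, distress_flag: bool = False) -> str:
    """Send predictable work to the AI; anything else, or any distress, to a human."""
    if distress_flag or touchpoint in HUMAN_TOUCHPOINTS:
        return "human"
    if touchpoint in AI_TOUCHPOINTS:
        return "ai"
    return "human"  # assumed default: unknown situations go to a person


def ai_share(touchpoints) -> float:
    """Fraction of a journey's touchpoints handled by the AI."""
    routed = [route(t) for t in touchpoints]
    return routed.count("ai") / len(routed)


journey = ["acknowledgment", "data_collection", "qualification", "scheduling",
           "document_collection", "faq", "objection_handling", "status_update",
           "attorney_matching", "status_update"]
print(route("scheduling"), route("scheduling", distress_flag=True))
print(ai_share(journey))
```

The sample journey is made up to land on a 70/30 split. The point is that the ratio is an outcome of the routing rules, not a quota the system enforces.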

The CEO studied my virtual whiteboard through the video call.

“So the AI does the predictable stuff,” he said slowly. “And humans do the… unpredictable stuff?”

“Exactly.”

“But how does the AI know when something becomes unpredictable?”

“That,” I said, “is the magic. The handoff logic.”

The Magic: The Handoff Logic

This is the most important part.

Most companies fail at AI not because their AI is bad.

They fail because the handoff is bad.

I shared my screen. Showed him examples of bad vs. good handoffs.

Bad handoff example (from their old chatbot):

Client: "I'm really confused about—"

Chatbot: "Let me transfer you to our FAQ page."

[Client abandoned]

Another bad handoff:

Client: [Answers 10 questions with AI]

Agent: [Picks up] "Hi, can you start from the beginning?"

Client: "I JUST explained everything!"

[Client frustrated]

Our handoff logic had three principles:

Principle 1: AI Announces Itself (Transparency)

AI: "Hi [Name], this is Legal Claim Services. Before we start, I want you to know I'm an AI assistant. My job is to gather basic information. If you'd prefer to talk with a person at any point, just say so and I'll transfer you immediately. Sound okay?"

Why this works:

- Manages expectations

- Gives control to client

- Reduces frustration when they realize it’s not human

- Builds trust through honesty
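The promise "just say so and I'll transfer you immediately" only holds if the system actually listens for the request. A minimal sketch of that check; the phrase patterns are assumptions, and a production system would need far broader coverage than one regular expression:

```python
import re

# Assumed phrasings for an explicit request to reach a person.
HUMAN_REQUEST = re.compile(
    r"\b(?:talk|speak)\s+(?:to|with)\s+(?:an?\s+)?(?:person|human|agent|someone)\b"
    r"|\breal person\b",
    re.IGNORECASE,
)


def wants_human(utterance: str) -> bool:
    """True when the caller explicitly asks for a person."""
    return bool(HUMAN_REQUEST.search(utterance))


print(wants_human("I'd rather talk to a person"))
print(wants_human("I was stopped at a red light."))
```

An explicit request like this should override any score: the caller was told they have control, so the transfer happens regardless of what the signals say.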

Principle 2: Context Preservation (No Repetition)

Every interaction, AI or human, is logged in a shared CRM visible to everyone on the team.

I showed him Sarah’s example on screen share:

[AI conversation with Sarah]

- Name: Sarah M.

- Accident: Rear-ended, stopped at red light

- Date: 3 days ago

- Injuries: Whiplash, possible herniated disc

- Emotional concern: 6-year-old daughter in back seat, now scared of cars

- Voice analysis: Pitch change when mentioning daughter, 8-second pause, volume decrease

- Composite score: 76/100

- Transfer reason: Child safety concern + emotional distress

- Time: 2:17 PM Pacific

- Transferred to: Maria (parent-focused specialist)

When Maria picks up, she sees all of this on her screen before the call connects:

Maria: [Reading the notes in CRM during 24-second transfer]

[Call connects]

Maria: "Hi Sarah, this is Maria. I understand you and your daughter were in an accident, and you're dealing with a lot right now. First—is your daughter okay?"

Sarah doesn’t repeat anything.

Conversation continues seamlessly.
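Context preservation comes down to one record that travels with the call. A minimal sketch of such a record and the briefing an agent might read during the transfer; the field names and the `briefing` format are hypothetical, while the values are the ones from Sarah's example:

```python
from dataclasses import dataclass, field


@dataclass
class HandoffContext:
    client_name: str
    accident: str
    injuries: str
    emotional_concern: str
    composite_score: int
    transfer_reason: str
    assigned_agent: str
    voice_notes: list = field(default_factory=list)

    def briefing(self) -> str:
        """Short summary for the agent to read before the call connects."""
        lines = [
            f"Client: {self.client_name}",
            f"Accident: {self.accident}",
            f"Injuries: {self.injuries}",
            f"Concern: {self.emotional_concern}",
            f"Voice: {'; '.join(self.voice_notes)}",
            f"Score: {self.composite_score}/100 ({self.transfer_reason})",
        ]
        return "\n".join(lines)


sarah = HandoffContext(
    client_name="Sarah M.",
    accident="Rear-ended, stopped at red light",
    injuries="Whiplash, possible herniated disc",
    emotional_concern="6-year-old daughter in back seat, now scared of cars",
    composite_score=76,
    transfer_reason="Child safety concern + emotional distress",
    assigned_agent="Maria",
    voice_notes=["pitch change when mentioning daughter",
                 "8-second pause", "volume decrease"],
)
print(sarah.briefing())
```

Whatever the storage looks like, the test is the same: the agent can open the call with the client's own facts instead of "can you start from the beginning?"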

Principle 3: Soft Handoffs (Empathetic Transitions)

Instead of: “Invalid input. Transferring to agent.”

We say: “I can hear this is important. Let me connect you with [Name], who specializes in situations like yours. They’re available right now.”

The difference:

- Acknowledges the person’s needs

- Explains WHY transferring

- Names the human (builds connection)

- Sets expectation (available now vs. callback)
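The four elements of a soft handoff map directly onto a message template. A minimal sketch built from the wording above; the function name is hypothetical, and the callback phrasing for the unavailable case is an assumption:

```python
def soft_handoff(agent_name: str,
                 acknowledgment: str = "I can hear this is important",
                 available_now: bool = True) -> str:
    """Build a transfer line that acknowledges, explains, names, and sets expectations."""
    if available_now:
        availability = "They're available right now."
    else:
        # Assumed wording; the original only gives the available-now case.
        availability = "They'll call you back as soon as possible."
    return (f"{acknowledgment}. Let me connect you with {agent_name}, "
            f"who specializes in situations like yours. {availability}")


print(soft_handoff("Maria"))
```

Keeping the agent's name and availability as parameters means the message never promises an immediate human when none is free.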

The CEO nodded through the webcam.

“Okay. But what about the agents? How did they react to working WITH an AI instead of being REPLACED by one?”

I took a long sip of my iced coffee. The café was getting busier around me.

“That,” I said, “almost killed the project.”

Training the Team: Why Half of Them Almost Quit (Via Zoom)

Week 2. Monday morning their time, Monday night mine.

Another scheduled Zoom call. 8 AM San Francisco. 11 PM Saigon.

I had my HCMC apartment set up like a command center. Two monitors. Whiteboard behind me. Professional lighting (learned that after Week 1 when people complained they couldn’t see me clearly).

15 agents joined the video call. They looked like they were attending a funeral.

Different backgrounds: home offices, kitchen tables, one person in their car (early shift, logged in from parking lot).

Maria (the 8-year veteran) unmuted and spoke for the group:

“So we’re being replaced by robots. Just say it.”

The Head of Operations (joining from her home office, I could see her kids’ artwork on the wall behind her) looked at me through the screen. Your turn.

“No,” I said into my webcam. “You’re being FREED FROM robot work.”

Confused faces across all 15 video tiles.

I shared my screen. Pulled up analytics.

“Right now,” I continued, “what percentage of your day is spent on actual relationship building? Actual empathy? Actual complex problem-solving?”

Silence across the grid of faces.

Then David, unmuting from his living room setup: “Maybe… 30%? The rest is data entry, scheduling, chasing documents, answering the same FAQ 50 times a day.”

“Exactly,” I said. “The AI is taking the 70% of your job that’s repetitive, boring, and doesn’t use your actual skills.”

“So you can spend 100% of your time on the 30% that actually matters.”

But they weren’t convinced.

Over the next two weeks of virtual training sessions, I heard every objection through my headphones:

Objection #1: “The AI will make mistakes and we’ll look bad”

Response: “You make mistakes too. Remember last month when David scheduled someone for the wrong time zone?”

David laughed awkwardly on camera. “Yeah, that was bad.”

“The AI won’t do that. But when the AI makes mistakes—and it will—we’ll fix them faster because we’re tracking everything.”

Objection #2: “Clients won’t trust a robot”

Response: “That’s why we’re transparent. We TELL them it’s AI. And we give them control to talk to a human anytime. Want to see the data?”

I shared my screen. Showed client satisfaction surveys from Week 4.

“87% said they appreciated knowing it was AI upfront. Quote: ‘At least they’re honest about it.’”

Objection #3: “I don’t understand how it works”

Response: “You don’t need to understand how it works. You need to understand WHEN it transfers to you and WHY. Let me show you.”

I did a live demo. Had the team watch me interact with the AI system in real-time, screenshare showing exactly what triggered transfers.

Objection #4: “What if it transfers cases I can’t handle?”

Response: “The AI will only transfer to you when it detects signals in YOUR area of expertise. Maria, you get parent cases. David, you get straightforward injury cases. Everyone gets routed what they’re best at.”

Maria unmuted: “Wait, so I won’t get random calls anymore? Just the ones that match my specialty?”

“Exactly.”

Her face on camera changed. “Oh. That actually sounds… better.”

Objection #5: “This feels like we’re being monitored”

This was the real fear.

Every call tracked. Every interaction logged. Every emotional signal measured.

Someone unmuted (I think it was one of the newer agents): “Are you going to use this to fire people who don’t perform?”

I was honest:

“The system monitors calls. But not to catch you doing something wrong. To learn what GOOD looks like.”

I shared my screen again. Showed the performance comparison I’d built.

“Right now, Maria converts at 14% and David converts at 6%. Why? Not because Maria is ‘better’—but because she does something in her process that builds more trust.”

“The system helps us figure out what that is. So we can teach everyone. So David can improve. So everyone gets better.”

David unmuted: “So you’re saying the AI is learning from Maria to help me?”

“Yes. That’s exactly what I’m saying.”

Maria spoke up through her webcam:

“And what if we don’t want to change how we work?”

The Head of Operations stepped in (unmuting from her home office):

“Then you’re in the wrong company. We’re evolving. Either evolve with us or find somewhere that isn’t.”

Harsh. But necessary.

By Week 3, we’d lost 2 agents.

They quit via Zoom. Said this wasn’t what they signed up for. I watched their video tiles disappear from future calls.

By Week 4, the remaining 13 were bought in.

Because they saw something change during our virtual sessions:

Their job got BETTER.

Instead of 80 calls per day (most of them boring):

35 calls per day (all meaningful).

Instead of exhausted at 3 PM:

Energized because every conversation mattered.

Instead of feeling like data entry clerks working from home:

Feeling like counselors who actually helped people.

Maria told me in Week 5, on a one-on-one Zoom call:

Her video showing her home office, professional headset, coffee mug visible.

“I was so angry at you Week 1. I thought you were destroying what I loved about this job.”

“Now? I can’t imagine going back. Yesterday I had 8 calls. Every single one needed me. Really needed me. I went home feeling like I’d made a difference.”

“That’s the job I signed up for.”

Week 8: The Moment Everything Clicked

Another video call with the CEO. Thursday afternoon his time, Friday morning mine.

He was back in his San Francisco office. I could see the Golden Gate Bridge through his window.

I was at a riverside café in District 2, laptop open, Saigon River glinting in the background of my camera.

He pulled up the dashboard on his screen, shared it with me.

Week 8 numbers:

| Metric | Week 0 | Week 1 | Week 8 | Change from W0 |
|---|---|---|---|---|
| Leads contacted | 892 | 847 | 1,247 | +40% |
| Successfully routed | 712 (80%) | 534 (63%) | 1,172 (94%) | +14 pts |
| AI handled completely | 156 (17%) | 89 (11%) | 748 (60%) | +43 pts |
| Transferred to humans | 556 (62%) | 445 (53%) | 424 (34%) | -28 pts |
| Conversion rate | 8.2% | 6.1% | 13.8% | +68% |
| Client satisfaction | 72% | 68% | 86% | +14 pts |
| Agent productivity | 80 calls/day | 73 calls/day | 35 calls/day | -56% (!) |
| Agent satisfaction | 62% | 51% | 81% | +19 pts |

Wait.

I stared at the screen through our video call.

Agents made FEWER calls but conversion was HIGHER?

“Exactly,” the CEO said through my laptop speakers, smiling for the first time in weeks.

Here’s what happened:

The AI handled 60% of cases completely:

- Simple questions

- Document requests

- Scheduling

- Status updates

- Basic FAQ

These cases never needed humans. They were WASTING agent time before.

The AI transferred 34% to humans:

- Complex cases

- Emotional distress

- High-value situations

- Objection handling

- Anything requiring judgment

These were the cases that actually converted.

The remaining 6% were disqualified:

- Already had attorney

- Outside statute of limitations

- Clearly at fault (no case)

- Scammers

The AI learned to identify these early and politely exit without wasting anyone’s time.
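If you want to check that the three buckets add up, here is a quick sketch of the arithmetic using the Week 8 counts from the dashboard. The variable names and the `share` helper are mine, for illustration only.

```python
# Week 8 counts copied from the dashboard table.
leads_contacted = 1247
ai_handled = 748
transferred_to_humans = 424

# "Successfully routed" is the sum of the two handled buckets.
successfully_routed = ai_handled + transferred_to_humans

# Whatever was not routed is the disqualified remainder.
disqualified = leads_contacted - successfully_routed


def share(count: int) -> int:
    """Share of all contacted leads, rounded to a whole percent."""
    return round(count / leads_contacted * 100)


print(successfully_routed, disqualified)
print(share(ai_handled), share(transferred_to_humans), share(disqualified))
```

The disqualified bucket works out to 75 leads in Week 8, which is the 6% that never reached an agent.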

The result:

Agents spent their time ONLY on cases that:

- Actually needed human touch

- Had high conversion potential

- Were properly qualified

Instead of:

- Chasing people who would never convert

- Answering the same FAQ 50 times

- Scheduling appointments manually

- Doing data entry

The CEO leaned back in his office chair, visible through his webcam.

“So we’re processing 40% more leads, with the same team size, at higher conversion rates, with happier agents.”

“Yes.”

“By automating LESS.”

“By automating SMARTER.”

Through my laptop camera, I could see a boat pass on the Saigon River behind me. Street vendors called out to tourists on the riverside walk.

“When do we get to 15%?” he asked.

“Give me four more weeks.”

The Metrics That Actually Mattered (Hint: Not What You Think)

Week 10. Full team video call. All hands.

Everyone on Zoom: agents from their home offices, operations team, attorneys joining from their law offices, finance team.

My screen was a grid of 23 faces.

“Let’s talk about the metrics that actually predict success,” I said, sharing my screen.

Most companies track:

- Conversion rate (%)

- Cost per acquisition ($)

- Lead volume (#)

- Response time (minutes)

“We’re adding five metrics nobody talks about,” I continued, clicking through slides.

The team could see my presentation on their screens.

Metric #1: Trust Velocity

Definition: Time from first contact to “trust milestone”

Trust milestone = Client voluntarily shares vulnerable information

Examples:

- Uploads medical records without reminder

- Asks specific questions about their case (shows engagement)

- Mentions emotional impact unprompted

- Responds to follow-ups within 2 hours

- Refers to agent by name

Why it matters:

I showed a table on screen:

| Trust Velocity | Conversion Rate | Attorney Accept Rate | Client Satisfaction |
|---|---|---|---|
| <24 hours | 67% | 94% | 9.2/10 |
| 24-48 hours | 48% | 81% | 8.1/10 |
| 48-72 hours | 31% | 68% | 7.3/10 |
| 72+ hours | 11% | 51% | 6.8/10 |

Fast trust = Everything gets easier
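A minimal sketch of how trust velocity could be computed and bucketed, assuming you log a timestamp for first contact and for the first trust milestone. The function names and the example timestamps are hypothetical. The bucket boundaries and conversion rates are the ones in the table.

```python
from datetime import datetime

# Conversion rate observed per bucket, copied from the table above.
CONVERSION_BY_BUCKET = {
    "<24 hours": 0.67,
    "24-48 hours": 0.48,
    "48-72 hours": 0.31,
    "72+ hours": 0.11,
}


def trust_velocity_hours(first_contact: datetime, milestone: datetime) -> float:
    """Hours between first contact and the first trust milestone."""
    return (milestone - first_contact).total_seconds() / 3600


def velocity_bucket(hours: float) -> str:
    """Map a trust velocity onto the four buckets used in the table."""
    if hours < 24:
        return "<24 hours"
    if hours < 48:
        return "24-48 hours"
    if hours < 72:
        return "48-72 hours"
    return "72+ hours"


# Hypothetical client: first call Monday 9 AM, uploads records Tuesday 3 PM.
first_contact = datetime(2025, 1, 6, 9, 0)
milestone = datetime(2025, 1, 7, 15, 0)

hours = trust_velocity_hours(first_contact, milestone)
bucket = velocity_bucket(hours)
print(hours, bucket, CONVERSION_BY_BUCKET[bucket])
```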

Metric #2: Handoff Success Rate

Definition: % of AI→Human transfers that feel seamless to client

Measured by:

- Client doesn’t ask “Why was I transferred?”

- Client doesn’t repeat information

- Client satisfaction score >8/10 post-transfer

- Agent satisfaction with transfer quality

Week 1 handoff success: 34%

Week 8 handoff success: 91%

The difference: Context preservation + empathetic transitions
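Here is one way the four checks above could roll up into a single rate. This is a sketch under an assumption: I treat a transfer as seamless only when all four criteria hold. The `Transfer` fields and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Transfer:
    """One AI-to-human handoff, scored after the call (hypothetical fields)."""
    asked_why_transferred: bool
    repeated_information: bool
    client_score: float  # post-transfer satisfaction on a 1-10 scale
    agent_rated_ok: bool  # agent was satisfied with the transfer quality


def is_seamless(t: Transfer) -> bool:
    # Assumption: all four criteria must hold for the handoff to count.
    return (
        not t.asked_why_transferred
        and not t.repeated_information
        and t.client_score > 8
        and t.agent_rated_ok
    )


def handoff_success_rate(transfers: list) -> float:
    """Percentage of transfers that met every criterion."""
    if not transfers:
        return 0.0
    return sum(is_seamless(t) for t in transfers) / len(transfers) * 100


sample = [
    Transfer(False, False, 9.0, True),
    Transfer(False, False, 8.5, True),
    Transfer(False, True, 9.0, True),   # client had to repeat themselves
    Transfer(False, False, 9.5, True),
]
print(handoff_success_rate(sample))
```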

Metric #3: Emotional Detection Accuracy

Definition: % of time AI correctly identifies emotional state

Measured by:

- False positives (transferred when not needed): <10%

- False negatives (didn’t transfer when needed): <5%

- Agent agreement with AI assessment: >85%

Week 1 accuracy: 47%

Week 8 accuracy: 89%

How we improved: Training data from 1,000+ calls, refined composite scoring
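The three targets above can be checked from a sample of reviewed calls. The sketch below makes assumptions I want to flag: the false positive rate is measured against all transfers, the false negative rate against all calls that needed a human, and "agent agreement" is the share of calls where the AI's decision matched the reviewer's. The names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewedCall:
    ai_transferred: bool  # what the AI decided
    human_needed: bool    # what the reviewing agent judged afterwards


def detection_report(calls: list) -> dict:
    transfers = [c for c in calls if c.ai_transferred]
    needed = [c for c in calls if c.human_needed]
    false_pos = sum(not c.human_needed for c in transfers)
    false_neg = sum(not c.ai_transferred for c in needed)
    agree = sum(c.ai_transferred == c.human_needed for c in calls)
    # Denominators below are assumptions; the article does not define them.
    return {
        "false_positive_rate": false_pos / len(transfers) if transfers else 0.0,
        "false_negative_rate": false_neg / len(needed) if needed else 0.0,
        "agreement": agree / len(calls) if calls else 0.0,
    }


def meets_targets(report: dict) -> bool:
    """Targets from the list above: <10% FP, <5% FN, >85% agreement."""
    return (
        report["false_positive_rate"] < 0.10
        and report["false_negative_rate"] < 0.05
        and report["agreement"] > 0.85
    )


calls = (
    [ReviewedCall(True, True)] * 4
    + [ReviewedCall(True, False)]    # unnecessary transfer
    + [ReviewedCall(False, True)]    # missed transfer
    + [ReviewedCall(False, False)] * 4
)
report = detection_report(calls)
print(report, meets_targets(report))
```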

Metric #4: Agent Utilization Quality

Definition: % of agent time spent on high-value activities

High-value:

- Complex case assessment

- Emotional support

- Objection handling

- Attorney matching

- Building relationships

Low-value:

- Data entry

- Scheduling

- Answering FAQ

- Document chasing

- Repetitive tasks

Week 0: 30% high-value, 70% low-value

Week 8: 87% high-value, 13% low-value

Result: Agents happier, more productive, better outcomes

Metric #5: Attorney Partner Satisfaction

Definition: How happy are attorneys with lead quality?

Measured by:

- Accept rate (% of leads they take)

- Quality rating (1-10 scale)

- Complaint rate

- Retention (attorneys staying in network)

Week 0:

- Accept rate: 64%

- Quality rating: 6.8/10

- Complaints: 12 per month

- Partner retention: 71%

Week 8:

- Accept rate: 89%

- Quality rating: 8.9/10

- Complaints: 2 per month

- Partner retention: 94%

Why this matters: Happy attorneys = More capacity = More leads processed

The COO unmuted from her home office:

“So the metrics that actually matter aren’t the ones we were tracking?”

“They’re not the ones ANYONE tracks,” I said through my webcam.

“Most companies obsess over cost per lead and conversion rate.”

“But those are outcome metrics. They tell you what happened.”

“These five metrics are process metrics. They tell you WHY it happened.”

“And more importantly—they’re predictive.”

I clicked to my next slide.

“If trust velocity is fast, conversion WILL be high.”

“If handoff success rate is high, satisfaction WILL be high.”

“If agent utilization quality is high, retention WILL be high.”

Fix the process metrics, and the outcome metrics fix themselves.

What We Got Wrong (And Had to Fix Fast)

Not everything worked remotely either.

Managing a transformation across 14 time zones via Zoom had unique challenges.

Here are the five mistakes we made—and how we fixed them:

Mistake #1: Over-Optimizing for Emotion Detection

Week 4: We made the AI SO sensitive to emotional signals that it was transferring everyone.

Someone said “my back hurts” → TRANSFER

Someone paused for 6 seconds → TRANSFER

Someone sighed → TRANSFER

Result: 78% transfer rate. Agents overwhelmed. Many transfers unnecessary.

Fix: Added a confidence threshold.

Don’t transfer on one signal. Require 3+ signals AND composite score >70/100.

During a late-night Zoom call (late night for me, morning for them), we recalibrated together.

Result: Transfer rate dropped to 34%. Accuracy improved to 89%.
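The recalibrated rule is simple enough to sketch in a few lines. The function name and signal labels are hypothetical. The two thresholds (3+ signals, composite score above 70) are the ones described above.

```python
def should_transfer(signals, composite_score, min_signals=3, min_score=70):
    """Transfer only when BOTH conditions hold.

    signals: labels of emotional signals detected on the call
    composite_score: 0-100 composite emotional score
    """
    distinct_signals = set(signals)  # the same signal twice counts once
    return len(distinct_signals) >= min_signals and composite_score > min_score


# One sigh, even with a high score, no longer triggers a transfer.
print(should_transfer(["sigh"], 90))

# Sarah's call: three voice signals and a composite score of 76.
print(should_transfer(["pitch change", "8-second pause", "volume decrease"], 76))
```

Counting distinct signals is my assumption. It keeps a caller who sighs three times from being treated the same as one who shows three different kinds of distress.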

Mistake #2: Not Preparing for Volume Spike

Week 6: Word spread. Attorneys loved the quality. They sent more cases.

Volume spiked from 1,000 leads/month to 1,800 leads/month.

The AI handled it fine.

The humans drowned.

Agents went from 35 calls/day to 58 calls/day. Quality dropped. Burnout started.

I could see it in the video calls. People looked exhausted.

Fix: Hired 5 more agents (all remote). But MORE importantly:

Created an overflow protocol:

- When agent queue >20 people: AI handles more cases solo

- When wait time >10 minutes: AI offers callback scheduling

- When after-hours: AI collects info, promises next-day callback

We built this collaboratively on Zoom over three sessions.

Result: Handled 2,847 leads/month by Week 12 without chaos.
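A minimal sketch of the overflow protocol as a single decision function. The enum and function names are hypothetical, and the order of the checks (after-hours first, then wait time, then queue length) is an assumption on my part. The three thresholds are the ones listed above.

```python
from enum import Enum


class Mode(Enum):
    NORMAL = "normal routing"
    AI_SOLO = "AI handles more cases solo"
    CALLBACK = "AI offers callback scheduling"
    AFTER_HOURS = "AI collects info, promises next-day callback"


def overflow_mode(queue_length: int, wait_minutes: float, after_hours: bool) -> Mode:
    # Assumed precedence: the most restrictive condition wins.
    if after_hours:
        return Mode.AFTER_HOURS
    if wait_minutes > 10:
        return Mode.CALLBACK
    if queue_length > 20:
        return Mode.AI_SOLO
    return Mode.NORMAL


print(overflow_mode(queue_length=12, wait_minutes=3, after_hours=False).value)
print(overflow_mode(queue_length=25, wait_minutes=6, after_hours=False).value)
print(overflow_mode(queue_length=25, wait_minutes=14, after_hours=False).value)
```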

Mistake #3: Ignoring Edge Cases

Week 5: A client spoke only Spanish. AI transferred to English-speaking agent. Disaster.

Week 6: A client had severe PTSD. AI’s calm robotic voice triggered them. They hung up.

Week 7: A client was deaf. Called via relay service. AI didn’t recognize relay operator patterns. Hung up on them.

We discovered all of these by reviewing call recordings together on screen share during our Zoom debriefs.

Fix: Built edge case routing:

- Language detection → Route to bilingual agents

- Relay service detection → Route to TTY-trained agents

- PTSD/trauma indicators → Route to trauma-informed specialists

- After-hours emergencies → Route to 24/7 crisis line

Result: 97% of edge cases handled appropriately (vs. 23% Week 5).
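The edge case routing can be pictured as an ordered lookup. This is a sketch, not the production logic: the flag names are hypothetical, and the precedence order (emergencies first, language last) is my assumption for cases where more than one flag is raised.

```python
from typing import Optional

# (flag raised by detection, destination), checked in order.
# The destinations come from the list above; the ordering is an assumption.
EDGE_CASE_ROUTES = [
    ("after_hours_emergency", "24/7 crisis line"),
    ("trauma_indicators", "trauma-informed specialist"),
    ("relay_service", "TTY-trained agent"),
    ("non_english", "bilingual agent"),
]


def route_edge_case(flags: set) -> Optional[str]:
    """Return the first matching destination, or None for a normal call."""
    for flag, destination in EDGE_CASE_ROUTES:
        if flag in flags:
            return destination
    return None


print(route_edge_case({"non_english"}))
print(route_edge_case({"non_english", "trauma_indicators"}))
print(route_edge_case(set()))
```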

Mistake #4: Forgetting About the Attorneys

Week 7: We were SO focused on client experience via our Zoom sessions, we forgot about the attorneys receiving the leads.

Some attorneys complained via email:

- “Clients expect immediate consultation but I’m booked 2 weeks out”

- “Clients are being promised things I can’t deliver”

- “I don’t have capacity for this many cases”

Fix: Attorney Capacity Management System

We built this during a dedicated Zoom session with attorney partners joining.

- Attorneys set monthly intake limits (e.g., “Max 10 new cases/month”)

- Real-time capacity tracking (when at 8/10, start warning)

- Auto-pause when at capacity (don’t send more leads)

- Wait-list option (“Attorney X is at capacity, but Attorney Y is available now”)

Result: Attorney satisfaction jumped from 72% to 94%.
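To make the capacity rules concrete, here is a minimal sketch. The class, field, and function names are hypothetical. The behavior follows the four bullets above: a monthly limit, a warning at 8 out of 10, an automatic pause at capacity, and a fallback to the next available attorney.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AttorneyCapacity:
    name: str
    monthly_limit: int            # e.g. "Max 10 new cases/month"
    accepted_this_month: int = 0
    warn_ratio: float = 0.8       # warn at 8/10, per the example above

    def status(self) -> str:
        if self.accepted_this_month >= self.monthly_limit:
            return "paused"       # at capacity: stop sending leads
        if self.accepted_this_month / self.monthly_limit >= self.warn_ratio:
            return "warning"
        return "available"


def pick_attorney(attorneys: list) -> Optional[AttorneyCapacity]:
    """Return the first attorney who is not paused, or None if all are full."""
    for attorney in attorneys:
        if attorney.status() != "paused":
            return attorney
    return None


attorney_x = AttorneyCapacity("Attorney X", monthly_limit=10, accepted_this_month=10)
attorney_y = AttorneyCapacity("Attorney Y", monthly_limit=10, accepted_this_month=8)

chosen = pick_attorney([attorney_x, attorney_y])
print(attorney_x.status(), attorney_y.status(), chosen.name)
```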

Mistake #5: Not Celebrating Wins

Week 8: The team was exhausted from constant changes managed via endless Zoom calls.

Metrics were improving. But morale was flat.

We forgot to CELEBRATE.

Fix: Weekly wins Zoom meeting (Fridays, 30 minutes):

- Share 3 success stories from that week

- Highlight top performers (agents + AI insights)

- Virtual coffee/donut delivery (sent DoorDash gift cards)

- CEO personally thanks team via video

Result: Agent satisfaction jumped 19 points in 4 weeks.

Lesson: Remote change is harder. People need to see progress AND feel appreciated, especially when they’re working from home.

Week 12: The Dashboard That Made the CEO Cry

All-hands Zoom call. Monday morning San Francisco, Monday night Saigon.

My screen showed 47 people. Entire company.

15 agents (from their homes). Operations team. Attorneys joining from their offices. Admin staff. Everyone.

The CEO shared his screen. Pulled up the dashboard.

3-Month Results:

| Metric | Before (Week 0) | After (Week 12) | Change |
|---|---|---|---|
| Leads processed/month | 892 | 2,847 | +219% |
| Conversion rate | 8.2% | 15.1% | +84% |
| Conversions/month | 73 | 430 | +489% |
| Cost per acquisition | $823 | $487 | -41% |
| Client satisfaction | 72% | 87% | +21% |
| Attorney acceptance rate | 64% | 89% | +39% |
| Agent productivity (quality) | 30% | 87% | +190% |
| Agent satisfaction | 62% | 81% | +31% |
| Attorney partner retention | 71% | 94% | +32% |
| Average revenue per conversion | $8,200 | $10,470 | +28% |

Monthly revenue: $598,600 → $4,502,100 (+652%)
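For anyone who wants to trace how the monthly revenue figure follows from the table, here is a short sketch of the arithmetic. The variable names are mine. Note that the revenue line only reproduces if the table's average-revenue figure is multiplied by conversions per month, not by total leads, so that is what the sketch does.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change in percent."""
    return (after - before) / before * 100


# Figures copied from the 3-month results table.
leads_before, leads_after = 892, 2847
rate_before, rate_after = 0.082, 0.151
value_before, value_after = 8_200, 10_470  # dollars, applied per conversion

# Conversions per month = leads x conversion rate, rounded to whole clients.
conversions_before = round(leads_before * rate_before)
conversions_after = round(leads_after * rate_after)

# Monthly revenue = conversions x average revenue figure.
revenue_before = conversions_before * value_before
revenue_after = conversions_after * value_after

print(conversions_before, conversions_after)
print(revenue_before, revenue_after)
print(round(pct_change(revenue_before, revenue_after)))
```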

Through my webcam, I watched the CEO’s face.

His voice cracked.

“Three months ago, we were bleeding money on failed consultants. We’d burned $307,000 trying to fix this.”

“Two months ago, our conversion rate was 6.1% and I thought we’d destroyed our business.”

“Today…” He paused. “Today we’re processing 430 conversions per month. That’s more than our entire YEAR last year.”

On my screen, I could see Maria crying in her video tile.

David was grinning from his home office setup.

The attorneys were nodding in their separate video squares.

But the CEO wasn’t done.

“This wasn’t AI that saved us. It was philosophy.”

“We stopped trying to replace humans with robots.”

“We started asking: How do we use robots to make humans more human?”

“The result? Our AI handles 60% of our touchpoints. But our agents spend 87% of their time on work that actually matters.”

“Our clients feel MORE cared for than when everything was human.”

“Our attorneys get BETTER quality leads than when we had a bigger team.”

“And our agents are HAPPIER doing more meaningful work with fewer boring tasks.”

He looked at the camera—looking at me through the screen.

“Karthick, I owe you an apology. When you said ‘automate less,’ I thought you were insane.”

“Turns out you were right.”

Through my webcam, sitting in my HCMC apartment at almost midnight, I smiled.

“I appreciate that. But this was a team effort. Everyone on this call made it happen.”

47 faces nodded on my screen.

The Five Non-Negotiables We Learned the Hard Way

After 12 weeks of brutal remote implementation, here’s what actually matters:

1. Transparency > Deception

Don’t try to make AI sound human.

Tell people it’s AI. Give them control. They’ll respect honesty over trickery.

2. Context Preservation > Everything

The #1 frustration in automated systems: repeating yourself.

Every touchpoint should have FULL context of every previous interaction.

3. Humans for Emotion, AI for Logic

Stop making AI do empathy.

It sucks at it. Always will. Use AI for tasks that don’t require emotional intelligence.

4. Process Before Technology

We spent $47K building AI before standardizing human process.

Mistake. Standardize the humans FIRST. Then teach AI to learn from consistency.

5. Measure Process, Not Just Outcomes

Conversion rate tells you what happened.

Trust velocity, handoff success, utilization quality tell you WHY.

Fix process metrics. Outcomes follow.

Why This Approach Won’t Work for Everyone

I need to be honest about something:

This approach is NOT universal.

It won’t work if:

❌ Your business is purely transactional

(E-commerce checkout, fast food, package tracking, bill payment)

❌ Your customers aren’t vulnerable

(B2B software sales, luxury retail, entertainment/gaming)

❌ You compete on price, not service

(Discount retailers, budget airlines, commodity products)

❌ Your team can’t handle remote transformation

(High turnover, minimal training budgets, no senior leadership buy-in)

It WILL work if:

✅ Your customers are in crisis or vulnerable

✅ Trust is your competitive advantage

✅ Your service requires judgment

✅ You can invest 3-6 months in transformation

The Question You Should Be Asking

Stop asking: “How can we automate more?”

Start asking: “What should we NEVER automate?”

For this legal firm, the “never automate” list was:

- The moment someone starts crying

- When someone mentions their children being hurt

- Complex liability disputes

- High-value cases (>$100K potential)

- When someone says “I don’t know if I need a lawyer”

Everything else? Fair game for AI.

What’s Coming in Part 3

Part 3 will reveal:

“Compliance as Competitive Moat: Why Our Competitor’s $31M Lawsuit Became Our Unfair Advantage”

Week 6. The CEO forwarded me an email at 6:47 AM Vietnam time.

Subject: “Did you see this?”

Attached: Lawsuit filing. $31.4M TCPA violation. Their main competitor.

Three attorney partners immediately called asking to switch networks.

Why? “We don’t want to be associated with a company that cuts corners.”

In Part 3, I’ll show you:

- The TCPA violation that destroyed a $200M competitor

- How we turned regulations into trust-building opportunities

- The consent strategy that INCREASED opt-in by 34%

- Why over-investing in compliance saved us millions

- The attorney who quit the competitor to join us (and what she told us)

- The compliance checklist we use for EVERY AI interaction

Plus: The moment the CEO realized that spending $127K on compliance infrastructure was the best ROI in company history.

When the competitor’s lawsuit hit the news, THREE of their top attorneys reached out asking to join this firm’s network.

Why?

“We don’t want to be associated with a company that cuts corners.”

Compliance isn’t overhead.

Compliance is your moat.

Next in series:

Part 3: Compliance as Competitive Moat → (Coming January 26, 2025)

Previously:

← Part 1: The $126M Question

Let’s Continue This Conversation

If you’re implementing AI remotely in a sensitive industry:

What’s your biggest fear about virtual implementation?

Where are you in the journey? (Week 1 panic? Week 8 breakthrough? Week 12 victory?)

What’s not working with remote teams?

Drop a comment below or contact me directly.

I respond to every single message.

Share Your Remote Implementation Story

Have you tried implementing AI remotely in a sensitive industry?

I’m collecting stories (successful AND failed) for research.

If you share yours, I’ll:

- Analyze your approach (free 30-min Zoom consultation)

- Identify what went wrong (or right)

- Give you specific recommendations

- Keep it confidential (unless you want to be featured)

Share your story here or DM me on LinkedIn.

About the Author:

I help regulated industries (legal, healthcare, finance) adopt AI without losing the human touch. Not by copying SaaS playbooks, but by building transformation frameworks that respect regulatory complexity and emotional customers. Based in Ho Chi Minh City, working with clients globally via remote collaboration. More about my work →

This Series: The Trust-Tech Paradox

Part 1: The $126M Question - How a legal CEO burned $307K on failed AI consultants before finding the counter-intuitive solution

Part 2: The 70/30 Rule Deconstructed ← You are here - The brutal Week 1-12 remote implementation story, including the $47K mistake and 47 emotional signals

Part 3: Compliance as Competitive Moat (Coming Soon) - Why our competitor’s $31M lawsuit became our unfair advantage

Part 4: The Metrics That Actually Matter (Coming Soon) - Trust velocity, handoff success, and the five metrics that predict success

Part 5: The Scaling Decision That Seemed Insane (Coming Soon) - Why we said NO to 5,000 leads when competitors were desperate

Part 6: Behavioral Signals > Demographics (Coming Soon) - How typing patterns predicted conversion better than injury severity

Part 7: If I Were Starting Today (Coming Soon) - The 5 non-negotiables and 3 things I’d never do again
